Generative AI: A Glossary

Understanding how Generative AI (GenAI) works can be challenging in an environment of hype-driven media representations, and a lack of understanding can lead to uncritical adoption of and trust in GenAI output. BC Libraries staff hope this glossary will help you develop and maintain critical thinking about GenAI tools.

The terms are arranged roughly by their ubiquity. Terms at the top of the list are more common, and terms toward the bottom of the list are more technical and/or recent, or appear less commonly in mass media.

Artificial Intelligence (AI)

Practitioners tend to use the phrase “machine learning,” not “AI,” which has its roots in marketing. The term was created in the mid-1950s for use in a grant application for a small group to meet at Dartmouth College to discuss ways to create human intelligence with computers, a task they thought could be completed quickly, portending many such claims about AI over the years. Commonly, “AI” is used imprecisely as an umbrella term to refer to everything from as-yet unrealized human-like machine intelligence, to GenAI tools like ChatGPT or Gemini, to specialized uses in grammar checking software, language translation, or even more specialized technical applications in medicine and other sciences. A medical technician using AI to compare CAT scans across thousands of patients to help doctors diagnose is doing something very different from using a one-size-fits-all tool like ChatGPT.

Generative AI (GenAI)

Generative AI uses neural networks and statistical modeling based on large datasets to generate output such as text or images. Generative models are distinct from discriminative models, which statistically analyze existing datasets to identify similarities and differences; these models tend to be used for tasks like image classification in medicine or law enforcement. Generative and discriminative models have also been combined into Generative Adversarial Networks (GANs) in order to train generative models to increase their accuracy. Generative models need considerably more computing power than discriminative ones because they generate huge amounts of randomized options to process.

ChatBot

Any computer entity able to converse with a human. The first was ELIZA, a chatbot created by Joseph Weizenbaum in the 1960s; Weizenbaum later characterized it as “deception” and called for limits on such tools. In Fall 2022 OpenAI publicly released ChatGPT, a Generative (see GenAI above) Pre-trained Transformer (see Transformer below), which made chatbots seem much more human-like.

Large Language Model (LLM)

Unstructured language data collections with billions of data points, used for “training” GenAI chat, at first composed mostly of language scraped from websites, but more recently including the digitized copyrighted content of newspapers, magazines, scholarly journals, and books, some with contractual agreements, many without. The inclusion of copyrighted content without permission or payment has led to numerous lawsuits. Though many in the GenAI industry thought increasing the size of LLMs would lead to ever greater accuracy, those gains seem to have hit a threshold. Philosophers have doubts about epistemological assumptions behind LLM-based GenAI, with at least one labeling it “bullshit.”

Prompt Engineering

A process for crafting GenAI prompts to influence output. Often, seemingly minor or strange differences in prompts can create major differences in output, such as prompting the GenAI as if it’s a Star Trek onboard computer. Calling it “engineering” might be a misnomer, as the language skills involved in creating prompts might be closer to those taught in humanities or creative writing than in engineering. Google’s NotebookLM editorial director has said people with humanities backgrounds are necessary for GenAI projects.

Hallucination

Realistic but inaccurate information generated by GenAI chat. Among librarians, fabricated article citations are one of the most common instances. Hallucinations are a great example of how GenAI chat works: it generates text based on probability. In other words, it creates likely text; it doesn’t search around for the exact whole text string of a citation the way a database or Google does. Every string of text in GenAI chat output is invented, and because it’s based on statistical models, the inventions are all quite plausible. It’s not good at precision, even according to researchers at OpenAI: the exact words and word order of a particular citation are quite rare, or in other words, improbable. Some academically-oriented tools try to overcome this baked-in inaccuracy with RAGs (see Retrieval-Augmented Generation, below) or more recently, Agents or Agentic AI (see below).
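The idea that a chatbot generates likely text instead of looking up an exact string can be sketched with a toy word-pair model. This is only an illustration under loud assumptions: the three titles are made up, and real GenAI tools use far larger neural models than this simple counter. The function names are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical "training data": three made-up article titles.
titles = [
    "climate change and coastal libraries",
    "climate policy and public libraries",
    "digital literacy and public schools",
]

def build_bigram_counts(texts):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for text in texts:
        words = text.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def most_likely_continuation(counts, start, max_words=5):
    """Repeatedly append the most frequent next word (ties: alphabetical)."""
    words = [start]
    while len(words) < max_words and counts[words[-1]]:
        candidates = counts[words[-1]]
        next_word = min(candidates, key=lambda w: (-candidates[w], w))
        words.append(next_word)
    return " ".join(words)

counts = build_bigram_counts(titles)
generated = most_likely_continuation(counts, "climate")
print(generated)            # a plausible-looking title
print(generated in titles)  # False: it never appeared in the data
```

The generated title is built entirely from word pairs that do occur in the data, which is why it looks plausible, yet the title as a whole exists nowhere in the source list. That is the same pattern as a fabricated citation.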

Anthropomorphism

The tendency to use language that attributes human intelligence to inanimate things, and in this case to AI models. Words like “training,” “thought,” “reasoning,” and “hallucination” anthropomorphize statistical, mathematical processes. Joseph Weizenbaum called it the ELIZA effect: the tendency of people to ascribe human attributes to computers; he also thought it could spell disaster for humanity. The late philosopher Daniel Dennett, seeing the early examples of GPT-created human images and videos, labeled them “counterfeit humans,” and called for developing a legal framework with punitive damages equivalent to those for counterfeit money.

Bias

LLMs invariably include the biases of the humans who created the texts ingested into them. Because companies haven’t been transparent about what they’ve included in LLMs, we can only guess at the biases that chatbots will reproduce. ChatBot programmers can correct bias (or add bias) with parameters (see Parameters below), but without the transparency of open weights (see Open-weights, below), it’s rarely clear what those parameters are.

This might be a good time to be open about my own biases: I’m skeptical of AI hype, and I’m yet to be convinced that the alleged benefits of GenAI are worth the ethical, social, and environmental tolls they’ve already taken and which are projected to increase considerably in the near future.

Artificial General Intelligence (AGI)/Artificial Super Intelligence (ASI)

AGI was coined to distinguish a broad, human-like intelligence from narrowly-purposed AI tools such as grammar correction, image sorting and matching, or type-ahead search. Sam Altman, the OpenAI CEO, has for several years justified billions in industry funding for GenAI as a major step towards AGI, but has recently qualified and downplayed that claim. Also, it’s wise to take anything Sam Altman says with a grain of salt, according to Karen Hao. (Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI at BC Libraries) Many AI proponents, cognitive scientists, and philosophers who study the mind also point out that intelligence exists outside the realms of language; hence, basing AGI on language is a mistake to begin with.

Reasoning Model, aka Chain-of-Thought

Prior to reasoning models, chatbots produced answers to prompts based only on pre-training. A reasoning model uses both pre-training and additional analysis between a user’s prompt and its output, weighing different responses against each other. In some models, users can opt to observe the chain of thought. The intent was to improve accuracy and provide a monitoring window into a model’s “reasoning,” though it’s unclear that window accurately represents reasoning. Because reasoning models use considerably more computing time, they cost more. Some GenAI chat companies offer reasoning models for additional fees, some per month and some per token-use. The BC instance of Gemini-3 currently offers Chain-of-Thought, branded as “Thinking with 3 Pro.”

Structured/Unstructured data

Structured data has a defined schema, such as in a spreadsheet or database, where data is in named fields, which means one can do precise analysis or locate exact pieces of data. Unstructured data lacks a schema. If you were to list all of your friends’ and family members’ names and birthdates in no particular order, that’s unstructured data. LLMs are unstructured data, with words numbering in the trillions, which means the precision of GenAI trained on them is limited to the statistical likelihood of word combinations. After training, what a chatbot retains are billions of combinations of statistical operations (see Parameters, below) for different word combinations; that’s a structure in a very rough sense, but not a sufficient structure for high accuracy, especially with less probable word combinations, such as specific titles. One of the major challenges of GenAI chatbots is to produce specific, accurate answers in spite of using a model ill-suited to that goal. Solutions tend to include adding layers that use considerable additional computing power, such as an additional “reasoning” layer (chain-of-thought, above), retrieval-augmented generation, a larger context window, and agents (see below for all three).
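The difference between the two kinds of data can be shown with the birthday example. In this minimal sketch, the names, dates, and the helper `birthdate_of` are all hypothetical; the point is only that named fields allow an exact lookup, while free text does not.

```python
# Structured: every record has the same named fields (a schema).
structured = [
    {"name": "Ana", "birthdate": "1990-04-12"},
    {"name": "Ben", "birthdate": "1985-11-03"},
]

# Unstructured: the same facts as free text, in no fixed order or format.
unstructured = (
    "Ben was born on the third of November in '85, "
    "and Ana's birthday is April 12, 1990."
)

def birthdate_of(records, name):
    """Exact lookup by field name; returns None if the person is not listed."""
    for record in records:
        if record["name"] == name:
            return record["birthdate"]
    return None

print(birthdate_of(structured, "Ana"))  # exact answer from a named field

# With free text we can confirm that a name is mentioned, but there is
# no field to read the date from without interpreting the sentence.
print("Ana" in unstructured)
```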

Neural Network, or Artificial Neural Network (ANN)

A machine-learning model loosely based on biological neural networks. Neural networks have been in development for many years. Generative AI involves many neural networks working together simultaneously, which became possible as the price of computer memory fell and cloud services became much more powerful.

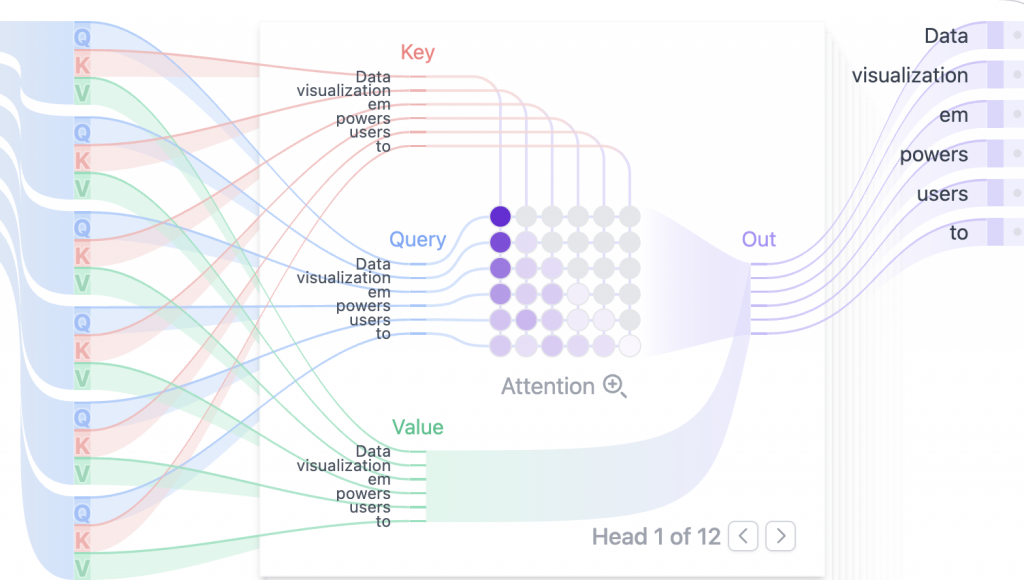

Transformer

The “T” in GPT: Generative Pre-trained Transformer. Transformers were the breakthrough technology that turned neural networks from a curiosity into the chat tool that OpenAI released in the fall of 2022. A very brief description: multiple layers of interacting neural networks simultaneously work on predicting the most probable next character-strings in a sentence, and on constructing sentences and paragraphs that resemble human-created text. It’s much easier to interactively visualize this activity than to describe it.

Retrieval-Augmented Generation (RAG)

When a chatbot can draw on an additional data-source (such as a collection of academic papers or all the course material of a college class), and weight that data more than the LLM data on which it was trained, it can more dependably generate text related to that data source. NotebookLM, supported by BC, can help you create a RAG chatbot. Note: Be careful when using other RAGs; NotebookLM protects user privacy. Other RAGs may permit access to user-supplied data in order to add it to LLMs. BC has an agreement with NotebookLM that it will not.
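The retrieval step can be sketched in a few lines. This is a simplified illustration, not how NotebookLM or any particular product works: the course notes are made up, the helper names are hypothetical, and real systems typically rank passages with numerical embeddings instead of the plain word overlap assumed here.

```python
def tokenize(text):
    """Lowercase words with surrounding punctuation stripped."""
    return {word.strip(".,?!").lower() for word in text.split()}

def retrieve(question, documents, top_k=1):
    """Return the documents sharing the most words with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Place the retrieved text ahead of the question sent to the chatbot."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this source:\n{context}\n\nQuestion: {question}"

# Hypothetical course material supplied by the user.
course_notes = [
    "The midterm exam is on October 14 in Room 204.",
    "Office hours are Tuesdays from 2 to 4 pm.",
    "The final paper is due on December 9.",
]

question = "When is the midterm exam?"
prompt = build_prompt(question, course_notes)
print(prompt)
```

Because the relevant passage travels inside the prompt, the chatbot has the exact wording in front of it and does not have to rely on what is statistically likely.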

Agent/Agentic AI

In the past year many chatbot models have introduced “AI agents,” which are routines that the chat itself can call (when given permission) to open spreadsheets, documents, search engines, or other apps, and insert text, formulas, search queries, etc. An AI agent that can open and search, say, Google Scholar, could resolve many of the hallucinated citation problems. “Agentic” AI would go a step further: an AI that acts something like a manager that employs multiple agents in order to carry out complex tasks with minimal human involvement, such as, say, searching Google for data, adding that data to a spreadsheet in which it sets up field names, and outputting results of a pivot table to answer a user prompt.
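One way to picture an agent is as a short list of routines the chat is allowed to request by name. The sketch below is an assumption-heavy illustration: the tool names, the shape of the request, and the tiny catalog are all hypothetical, and the chatbot that would produce the request is left out entirely.

```python
# Hypothetical tools. In a real product these would open apps or call
# search services; here they are stand-ins.
def search_catalog(query):
    sample_index = {"weizenbaum": "Computer Power and Human Reason (1976)"}
    return sample_index.get(query.lower(), "no match found")

def add_numbers(a, b):
    return a + b

TOOLS = {"search_catalog": search_catalog, "add_numbers": add_numbers}

def run_tool_call(call, permitted):
    """Run a requested tool only if it exists and the user has allowed it."""
    name = call["tool"]
    if name not in TOOLS:
        return f"unknown tool: {name}"
    if name not in permitted:
        return f"permission denied: {name}"
    return TOOLS[name](**call["arguments"])

# The user has granted permission for catalog searches only.
permitted = {"search_catalog"}

search_result = run_tool_call(
    {"tool": "search_catalog", "arguments": {"query": "Weizenbaum"}}, permitted
)
blocked_result = run_tool_call(
    {"tool": "add_numbers", "arguments": {"a": 2, "b": 3}}, permitted
)
print(search_result)
print(blocked_result)
```

The search result comes from a lookup, not from text generation, which is why an agent of this kind could help with hallucinated citations.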

Open-source

A long-standing tradition of sharing source code openly with other developers. GitHub is a major platform for sharing open-source code, and Stack Overflow has long been a site for sharing coding knowledge. One reason ChatBots can be helpful in writing code is their access to sites like GitHub and Stack Overflow; a problem is that GenAI chatbots don’t distinguish between non-working code submitted by users in order to ask questions, and working code.

So far, none of the biggest LLM chat models have been shared openly in this way, in spite of the name of the first developer, OpenAI. Some are partially open-source (see Open-weights, below).

Open-weights

A few LLM models (Meta’s Llama, Deepseek, Qwen, and very recently OpenAI) have started to openly share the weighting parameters (not the raw LLM data itself), which means developers can download the model file and customize weights in their own locally-hosted version (see Parameters, below).

Token

The smallest unit of data in an LLM: a number that represents a word, a prefix or suffix, or punctuation. Initially, before GPT-3 was released, LLMs contained billions of tokens from relatively limited datasets. Today, most LLMs are trained on trillions of tokens. Everything that goes on “inside” a GenAI chat tool is carried out as statistical operations on numerical tokens which are then returned to language as output for human users.
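A toy version of the text-to-numbers step might look like the following. The five-entry vocabulary is hypothetical, and the longest-match rule is a simplification chosen for readability; production tokenizers learn vocabularies of many thousands of pieces from data.

```python
# Hypothetical vocabulary: each word piece or punctuation mark gets a number.
vocab = {"un": 0, "believ": 1, "able": 2, "!": 3, "read": 4}

def encode(text, vocab):
    """Split text into the longest known pieces and return their numbers."""
    ids = []
    position = 0
    while position < len(text):
        for end in range(len(text), position, -1):
            piece = text[position:end]
            if piece in vocab:
                ids.append(vocab[piece])
                position = end
                break
        else:
            raise ValueError(f"no token for {text[position]!r}")
    return ids

def decode(ids, vocab):
    """Turn numbers back into text for the human reader."""
    pieces = {number: piece for piece, number in vocab.items()}
    return "".join(pieces[number] for number in ids)

token_ids = encode("unbelievable!", vocab)
print(token_ids)                 # the numbers the model would operate on
print(decode(token_ids, vocab))  # back to language
```

Note that a prefix ("un") and a suffix ("able") are separate tokens, so the same pieces can be reused to represent other words such as "readable".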

Temperature

A parameter that can adjust an LLM-based GenAI chat model’s output on a continuum from random (hot) to high probability (cool). A “hot” chatbot might have less predictable and less accurate, but more novel or unusual answers. A “cool” chatbot might be more normatively accurate, but also predictable to the point of cliché and less human-like.
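The hot-to-cool continuum can be seen in a small calculation. A common way to apply temperature is to divide each candidate word's score by the temperature before converting scores to probabilities (a softmax). The three scores below are made up, and the function name is hypothetical.

```python
import math

def softmax_with_temperature(scores, temperature):
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    scaled = [score / temperature for score in scores]
    largest = max(scaled)  # subtracting the maximum avoids overflow
    exponentials = [math.exp(value - largest) for value in scaled]
    total = sum(exponentials)
    return [value / total for value in exponentials]

# Hypothetical scores for three candidate next words.
scores = [2.0, 1.0, 0.0]

cool = softmax_with_temperature(scores, temperature=0.2)
hot = softmax_with_temperature(scores, temperature=5.0)

print([round(p, 3) for p in cool])  # nearly all probability on the top word
print([round(p, 3) for p in hot])   # probability spread across all three
```

At the cool setting the top-scoring word is chosen almost every time, which is the predictable end of the continuum. At the hot setting the less likely words are picked far more often.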

Parameters

Elements of the training process that assign weights to different inputs, configure biases as a layer over weights, and control the model’s size, shape, resource use, and behavior with hyperparameters (such as temperature). With Open-weights (see above), users can see and customize these parameters. Note: This is a very simplified definition. The (often billions of) parameters that are created as a part of an LLM are the heart of how an LLM-based chatbot generates probabilities for combinations of text strings. The more (and more finely-tuned) parameters in an LLM, the more realistic and (to a point) accurate the output, and the more resource-intensive the training.
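To give a sense of where the "billions" come from, the sketch below counts the weights and biases in a very small, fully connected neural network. The layer sizes are hypothetical, and real chat models use more elaborate layer types, so treat this as arithmetic for intuition only.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    Each connection between two layers has one weight per input-output
    pair, plus one bias per output.
    """
    total = 0
    for inputs, outputs in zip(layer_sizes, layer_sizes[1:]):
        weights = inputs * outputs
        biases = outputs
        total += weights + biases
    return total

# Hypothetical tiny network: 4 inputs, 8 hidden units, 3 outputs.
tiny_total = count_parameters([4, 8, 3])
print(tiny_total)  # (4*8 + 8) + (8*3 + 3) = 67

# Widening the layers makes the count grow very quickly.
wider_total = count_parameters([4000, 8000, 3000])
print(wider_total)
```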

Context Window

The number of tokens that the model can consider at one time. A larger context window allows the model to “remember” a longer set of prompts and responses. For example, Gemini-3 has an extended context window of just over a million tokens, which means a novel-sized document could be part of the prompt. Of course, this kind of extension, like many others, takes more computing time and hence uses more resources.
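The "remembering" described above can be pictured as a budget. In this sketch, which assumes one common approach and does not describe any specific product, the oldest messages are dropped once the conversation no longer fits. Counting words stands in for counting tokens, and the function names are hypothetical.

```python
def count_words(message):
    """Stand-in for a real token counter (an assumption for this sketch)."""
    return len(message.split())

def fit_to_context(messages, max_tokens, count_tokens=count_words):
    """Keep the most recent messages whose combined size fits the window."""
    kept = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))

conversation = [
    "my name is Ana",
    "what is a token",
    "and what is temperature",
]

small_window = fit_to_context(conversation, max_tokens=8)
large_window = fit_to_context(conversation, max_tokens=100)
print(small_window)  # the earliest message no longer fits
print(large_window)  # everything fits
```

With the small window, the message containing the user's name falls outside what the model can consider, so a later question about the name could not be answered from the conversation.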